At the recent MoodleMoot I was asked a number of questions, both directly and via Twitter, about the model the OU uses for our VLE development; a blog post seems the best way to describe what we do.

The model isn't based on any formal methodology as such; it has evolved to meet the needs of the OU. There are certainly 'agile' elements in the process, at least in that we constantly adjust our developments to meet changing requirements from the OU community, while still ensuring that we can test developments thoroughly before we release them to our students.

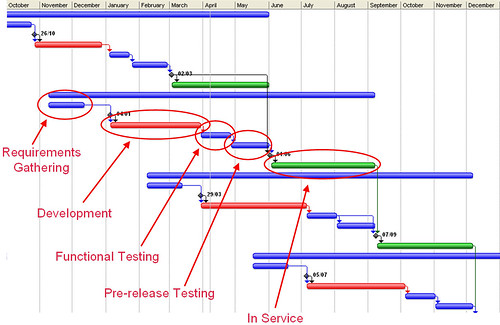

We now have a quarterly release cycle, and we try to minimise the number of changes between releases. Each cycle spans approximately ten months, from a requirements-gathering phase through to a three-month period when the release is ‘in service’. Because a new release goes live every quarter, several cycles overlap at any one time.

1. Requirements Gathering. For a couple of years we have had a process (and activities) that encouraged the submission of requirements ahead of each of the four releases in the year. This led to a lot of requirements being submitted, but the development team found it difficult to keep submitters informed about the progress of their requests. To improve on this, we’re about to roll out a requirements-gathering database into which any member of the University can enter a requirement, and to which they can return later to see what progress has been made in implementing it, or why it has not yet been possible to address it.

At a pre-announced date, approximately six months before a release is due to go live, we gather the most pressing requirements from the database and from other sources around the University and draw up a development plan for the release.

These decisions are based on (i) operational requirements, for example changes needed to improve or preserve VLE performance; (ii) changes in University strategic plans; and (iii) the value of particular developments to large numbers of students.

We regard each development cycle as a distinct activity, so we reassess all new and outstanding requirements at the start of each cycle. This means that new requirements (particularly those that are performance related or address a particular strategic target) may displace requirements that have been in the "queue" for a long time.

2. Once the development priorities for the release have been determined, the development tasks are allocated to the development teams (each team consists of a lead technical developer working with a number of technical developers). At this time we prepare a development environment for the new release, merging the latest stable release from moodle.org with our code base. This then forms the underlying code base for the next three months’ development work. Each developer has their own development environment and, once they are happy with their development code (and it has been reviewed by one of the lead developers), can commit changes into the CVS repository. One of the challenges with a large number of parallel developments is that changes made by one developer can affect code committed by another. Making sure that all the developers are aware of the range of developments certainly helps, but we are currently experimenting with a continuous integration system that should highlight such problems very early in the process.

3. At the end of the development period (usually 12 or 13 weeks), the developments for the release should be functionally complete, and the statement of what is likely to be in the release, prepared at the start of the development period, is revised. At this point the new developments are passed to the testing team, who test them to ensure that the functionality is as required (and we can start preparing the user documentation for the new features). Issues raised by the testing team are logged directly in the Bugzilla system used by the development teams.

4. About four weeks after functional testing starts, the new release of the VLE can be put onto our acceptance test system (this is the first rehearsal for the upgrade). By then the new features will have been through one round of testing and bug-fixing and been integrated with the other new features into a release candidate. This is handed over to the testing team again for two weeks of intensive testing, followed by a window for bug-fixing and then a further week of verification testing. There is then a further upgrade rehearsal before the real upgrade. By this point in the cycle we will also have release documentation in place, and the online Computing Guide (available to all users) will have been updated to reflect changes in features.

5. The upgrades to the production servers happen on the first working Tuesday of March, June, September and December, ideally during our advertised "at-risk" periods. On some occasions, typically when major database changes require the database to be reloaded, we need a longer downtime than the "at-risk" period allows, but we try to keep the interruption to a minimum (and we advertise it well in advance). We almost always have a catch-up release seven days after the main release so that patches that didn’t quite make the deadline can get onto the live system.

6. Once the release has gone onto the production servers, we try to minimise the number of changes to the system. Security patches are applied as soon as possible; any other requested change is vetted to confirm whether it really is needed urgently. A change to remedy a serious problem with an assessed activity is likely to be allowed, but a cosmetic change would be queued for the next scheduled release.

7. The release will then stay in service for about three months, until the next release is ready to roll.

8. We also do regular reviews of both usability and accessibility. For new features we factor usability and accessibility testing periods into development. This is in addition to incremental testing of each release just after it goes live, carried out either by expert testers or by student testing panels. The output from this testing is fed back into the development process: urgent changes are treated as critical patches, and less urgent changes are queued for the next scheduled release.
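As a rough illustration of the calendar arithmetic in step 5, here is a minimal Python sketch that lists the main upgrade dates for a given year (the first Tuesday of March, June, September and December) and pairs each with its catch-up release seven days later. It is not the OU's own tooling: the function names are hypothetical, and it assumes the first Tuesday of each month is a working day, so it ignores holidays and any ad hoc rescheduling.

```python
import datetime

# Months in which the quarterly production upgrade is scheduled (step 5).
RELEASE_MONTHS = (3, 6, 9, 12)
TUESDAY = 1  # date.weekday(): Monday == 0, Tuesday == 1
CATCH_UP_DELAY = datetime.timedelta(days=7)


def first_tuesday(year: int, month: int) -> datetime.date:
    """Return the first Tuesday of the month (assumption: holidays ignored)."""
    first = datetime.date(year, month, 1)
    offset = (TUESDAY - first.weekday()) % 7
    return first + datetime.timedelta(days=offset)


def release_schedule(year: int) -> list[tuple[datetime.date, datetime.date]]:
    """Pair each main upgrade date with its catch-up release a week later."""
    schedule = []
    for month in RELEASE_MONTHS:
        main = first_tuesday(year, month)
        schedule.append((main, main + CATCH_UP_DELAY))
    return schedule


if __name__ == "__main__":
    for main, catch_up in release_schedule(2010):
        print(f"main upgrade {main.isoformat()}, catch-up {catch_up.isoformat()}")
```

For 2010, for example, this gives main upgrades on 2 March, 1 June, 7 September and 7 December, each followed by a catch-up release on the next Tuesday. A real schedule would also need a list of University closure days to honour the "working" in "first working Tuesday".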
